By Kim Xi Harris

Founder & CEO, Lex Arca Legal Vault | Calculate Your Firm’s Billing Leakage: calculator.lex-arca.com

According to Clio’s 2026 Legal Trends Report for Solo and Small Law Firms (May 2026), 71% of solo practitioners and 75% of small firms are now using AI to complete legal work — yet fewer than 33% have seen any revenue increase from it, compared to nearly 60% of enterprise firms. The gap between AI adoption and AI compliance is not a policy problem. It is an architecture problem.

Most legal AI compliance frameworks being sold to attorneys in 2026 share a structural flaw: they treat compliance as something you perform after AI generates output, rather than something your platform enforces before output is ever produced. The difference between those two approaches is not a matter of thoroughness. It is the difference between documented professional oversight and optimistic after-the-fact review — and courts are beginning to notice.

Why Do After-the-Fact AI Verification Frameworks Keep Failing Attorneys?

After-the-fact AI verification frameworks fail attorneys because they place the burden of compliance on attorney behavior rather than platform architecture. A verification checklist requires attorneys to remember to apply it, apply it correctly, and apply it consistently — across every matter, every filing, every AI-assisted research session. Human behavior at scale is not a reliable compliance mechanism. Architecture is.

The sanctions record in 2026 makes this clear. A DOJ attorney was terminated in March 2026 after fabricated AI-generated citations appeared in a federal brief — discovered not by a supervising partner running a verification checklist, but by the pro se plaintiff on the other side. An $86,000 sanctions award has been entered against a Florida attorney whose AI-assisted submissions contained invented case law. A 350-person firm saw three attorneys disqualified and referred to state bars after AI fabrications made it into filed documents.

In each instance, the failure was not that the attorney lacked a process. It was that the process lived in policy, not in the platform.

What Does ABA Formal Opinion 512 Actually Require, and Can a Checklist Satisfy It?

ABA Formal Opinion 512 requires attorneys to exercise “an appropriate degree of independent verification” over all AI-generated work product before it is used in a matter. Enforced under Model Rules 1.1, 1.4, and 1.5, this obligation cannot be delegated to a vendor, a paralegal, or a support workflow. The licensed attorney is personally accountable.

A checklist can remind you to verify. It cannot prove that you did.

That distinction is where most legal AI platforms leave attorneys exposed. A verification policy lives in a document somewhere. A verification attestation — signed, datestamped, exportable, and attached to the file — is documented proof of attorney oversight at the moment of use. ABA Opinion 512 does not require a policy. It requires documented professional judgment. Those are not the same thing.

What Is Compliance-by-Architecture, and How Does It Differ From a Verification Policy?

Compliance-by-architecture means the legal AI platform enforces compliance obligations structurally — before strategy is generated, not after it is reviewed. Rather than asking attorneys to verify output against a checklist, the platform runs a jurisdictional gate before any AI synthesis begins.

In Lex Arca Legal Vault’s Case Premise Intelligence Engine, this gate is called the Sentinel. Before the platform synthesizes a single strategic pattern from an attorney’s case premise, Sentinel checks the assigned court and judge against a standing orders database. If that jurisdiction carries an AI filing restriction, a mandatory disclosure order, or an outright prohibition on AI-assisted submissions, strategy synthesis stops. No output is generated. There is nothing to verify because the platform has already identified — and blocked — a non-compliant workflow before it could begin.
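The gate-before-generation pattern described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not Lex Arca's implementation: the names `check_gate`, `synthesize`, `GateBlocked`, and `STANDING_ORDERS`, along with the court IDs and judges, are hypothetical placeholders, and a real standing orders database would be far larger and continuously updated.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Restriction(Enum):
    FILING_RESTRICTED = "AI filing restriction"
    DISCLOSURE_REQUIRED = "mandatory disclosure order"
    PROHIBITED = "prohibition on AI-assisted submissions"


@dataclass(frozen=True)
class GateResult:
    cleared: bool
    court_id: str
    judge: str
    restriction: Optional[Restriction] = None


class GateBlocked(Exception):
    """Raised when the gate refuses to start synthesis."""


# Hypothetical standing-orders table keyed by (court_id, judge).
# The entries are invented placeholders, not real court orders.
STANDING_ORDERS = {
    ("court-a", "Judge Example"): Restriction.PROHIBITED,
    ("court-b", "Judge Sample"): Restriction.DISCLOSURE_REQUIRED,
}


def check_gate(court_id: str, judge: str, orders=STANDING_ORDERS) -> GateResult:
    """Look up the assigned court and judge before any synthesis runs."""
    restriction = orders.get((court_id, judge))
    return GateResult(
        cleared=restriction is None,
        court_id=court_id,
        judge=judge,
        restriction=restriction,
    )


def synthesize(case_premise: str, court_id: str, judge: str) -> str:
    """Produce output only if the gate clears; otherwise nothing is generated."""
    result = check_gate(court_id, judge)
    if not result.cleared:
        raise GateBlocked(
            f"{court_id} / {judge}: {result.restriction.value}; synthesis stopped"
        )
    # Stand-in for the actual synthesis step, which the article does not specify.
    return f"Intelligence Brief for: {case_premise}"
```

The design point is ordering: the lookup happens inside `synthesize` before any output exists, so a blocked jurisdiction raises an error instead of producing a brief that someone must remember to review.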

As of early 2026, more than 300 standing court orders govern AI-assisted filings across U.S. federal and state courts, a number that grew by more than 200 in the second half of 2025 alone. Attorneys relying on manual verification to navigate this landscape are managing a compliance obligation that is expanding faster than any individual practitioner can reliably track.

How Does Lex Arca’s Proof Chain Satisfy Attorney Oversight Requirements Under ABA Opinion 512?

Every other platform gives you a policy. Lex Arca gives you a proof chain.

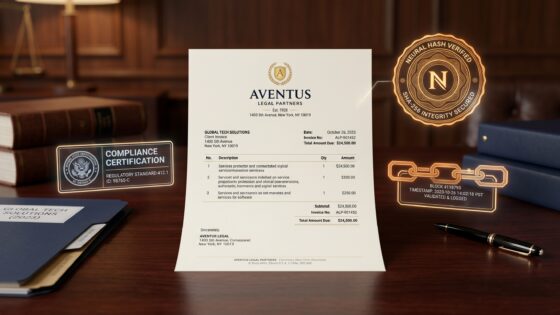

Lex Arca Legal Vault generates a four-step, cryptographically timestamped compliance proof chain on every CPIE session — not a policy, and not a reminder to verify. The chain is sequential and cannot be altered:

- Sentinel Gate — Court ID and judge are checked against the standing orders database. Non-compliant jurisdictions are blocked. The gate result is logged.

- CPIE Synthesis — An AI Intelligence Brief is generated against the cleared case premise, vault document metadata, and attorney strategic annotations. Output is surfaced for attorney review and professional evaluation — not for direct filing.

- Verification Attestation — The attorney reviews the Intelligence Brief and affirmatively attests to independent professional judgment. The attestation generates a signed, datestamped, exportable PDF — formatted for direct attachment to any filing as documented proof of attorney oversight. This certificate references ABA Formal Opinion 512, Model Rules 1.1, 1.4, and 1.5 by name.

- Activity Log Entry — The session is recorded in the platform’s append-only, tamper-evident activity log — cryptographically timestamped, tied to the matter name, attorney of record, and session timestamp. Simultaneously, the session logs as a billable activity in Neural Billing with the same cryptographic anchor.

The compliance record is not reconstructed from memory later. It is generated at the moment of use, by the platform, as an architectural output of the workflow itself.
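One common way to make a sequential log tamper-evident is a hash chain, in which each entry stores the hash of the entry before it. The sketch below illustrates that general technique for the four steps listed above. It is an assumption about how such a chain could work, not a description of Lex Arca's internals: `ProofChain`, its field names, and the sample matter and attorney are hypothetical, and the timestamp comes from the local clock rather than a trusted timestamping service.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the entry body."""
    payload = json.dumps(body, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


class ProofChain:
    """Append-only log; each entry commits to the one before it."""

    def __init__(self):
        self._entries = []

    def append(self, step, matter, attorney, detail, timestamp=None):
        body = {
            "step": step,
            "matter": matter,
            "attorney": attorney,
            "detail": detail,
            "timestamp": timestamp or datetime.now(timezone.utc).isoformat(),
            "prev_hash": self._entries[-1]["hash"] if self._entries else GENESIS,
        }
        entry = dict(body, hash=_digest(body))
        self._entries.append(entry)
        return dict(entry)

    def entries(self):
        """Return copies so callers cannot mutate the stored log."""
        return [dict(e) for e in self._entries]

    @staticmethod
    def verify(entries) -> bool:
        """Recompute every hash and link; any edit or reordering fails."""
        prev = GENESIS
        for entry in entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body.get("prev_hash") != prev or _digest(body) != entry.get("hash"):
                return False
            prev = entry["hash"]
        return True


# Usage: record the four steps for one session, then check the chain.
chain = ProofChain()
for step, detail in [
    ("sentinel_gate", "cleared"),
    ("cpie_synthesis", "brief generated"),
    ("verification_attestation", "attorney attested"),
    ("activity_log", "session recorded"),
]:
    chain.append(step, "Example v. Example", "A. Attorney", detail)

exported = chain.entries()
print(ProofChain.verify(exported))   # True
exported[1]["detail"] = "edited after the fact"
print(ProofChain.verify(exported))   # False
```

A hash chain shows that a record was altered. It does not by itself prove when an entry was created, which is why production systems typically pair it with an external timestamping or signing service.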

Which Attorneys Are Most Exposed by Compliance-as-Policy Frameworks?

Solo practitioners and small litigation firms are the most exposed. At larger firms, compliance policies can be administered by dedicated legal operations staff, practice group chairs, and multi-layer supervision workflows. A solo attorney or a two-person litigation firm has no such infrastructure. If the platform does not enforce compliance, no one else will.

Yet 75% of U.S. attorneys are currently using AI in their practice, while only 25% have received any formal AI ethics training, and 44% of law firms lack a documented AI governance policy entirely. The verification burden is falling on the practitioners least equipped to manage it manually — and the sanctions record reflects exactly that distribution.

Lex Arca Legal Vault was architected specifically for the 400,000 solo practitioners and small-firm litigators that enterprise legal AI platforms price out and compliance frameworks underserve. The platform’s local-first private vault architecture means client data is architecturally excluded from Lex Arca’s infrastructure. The compliance proof chain, the jurisdictional gate, and the attorney attestation workflow are available to the solo practitioner at the same level of structural rigor as any enterprise deployment.

Key Takeaways

- As of 2026, more than 300 standing court orders govern AI-assisted filings across U.S. courts — a compliance landscape expanding faster than any manual verification process can reliably track.

- ABA Formal Opinion 512 requires documented proof of independent attorney judgment over AI output, enforced under Model Rules 1.1, 1.4, and 1.5 — a verification checklist satisfies the reminder, not the requirement.

- Attorneys operating without a compliance-by-architecture platform should audit which AI-active jurisdictions they currently practice in and verify whether their platform’s output generates any documented attestation record.

- Lex Arca Legal Vault provides a four-step cryptographically timestamped compliance proof chain — Sentinel jurisdictional gate, CPIE synthesis, attorney-signed Verification Attestation certificate, and an append-only, tamper-evident activity log — designed to satisfy ABA Opinion 512 compliance requirements at the moment of use, not after the fact.

- Calculate your firm’s billing leakage and get early access to the Founding Firms VIP cohort at calculator.lex-arca.com.

About the Author

Kim Xi Harris is the Founder and CEO of Lex Arca Legal Vault, an AI-native litigation intelligence and compliance platform for solo and small-firm attorneys. She is a Cornell Women’s Entrepreneur Program graduate, SBA Women in Business Champion Award recipient, WOSB certified, and holds five Google AI certifications. Calculate your firm’s billing leakage and join the VIP waitlist at calculator.lex-arca.com — or reach us at legalvault@lex-arca.com.